Google Massively Automates Tropical Deforestation Detection

Landcover change analysis has been an active area of research in the remote sensing community for many years. The idea is to make computational protocols and algorithms that take a couple of digital images collected by satellites or airplanes, turn them into landcover maps, layer them on top of each other, and pick out the places where the landcover type has changed. The best protocols are the most precise, the fastest, and the most able to chew on multiple images recorded under different conditions. One of the favourite applications of landcover change analysis has been deforestation detection. A particularly popular target for deforestation analysis is the tropical rainforests, which are being chainsawed down at rates that are almost as difficult to comprehend as it is to judge exactly how bad the effects of their removal will be for biological diversity, planetary ecosystem functioning and climate stability.
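For the curious, the classify-overlay-compare idea can be sketched in a few lines of Python. This is a toy illustration only, not the algorithm used by Google, Asner's group or Imazon: the NDVI threshold, the two class names, and the two tiny "scenes" (with their years) are all made up for the example.

```python
# Toy post-classification change detection. Everything here is an
# illustrative assumption, not the method used by any real project.

FOREST = "forest"
CLEARED = "cleared"

# Hypothetical threshold: pixels with NDVI above this are called forest.
NDVI_FOREST_THRESHOLD = 0.6


def ndvi(red, nir):
    """Normalized difference vegetation index for one pixel."""
    total = nir + red
    return 0.0 if total == 0 else (nir - red) / total


def classify(image):
    """Turn a grid of (red, nir) reflectance pairs into a landcover map."""
    return [
        [FOREST if ndvi(red, nir) > NDVI_FOREST_THRESHOLD else CLEARED
         for (red, nir) in row]
        for row in image
    ]


def deforested_pixels(before, after):
    """Overlay two landcover maps; list (row, col) that went forest -> cleared."""
    changed = []
    for r, (row_before, row_after) in enumerate(zip(before, after)):
        for c, (old, new) in enumerate(zip(row_before, row_after)):
            if old == FOREST and new == CLEARED:
                changed.append((r, c))
    return changed


# Two made-up 2x3 scenes of the same area, as (red, nir) reflectance pairs.
scene_2008 = [
    [(0.05, 0.50), (0.04, 0.45), (0.20, 0.25)],
    [(0.05, 0.48), (0.06, 0.52), (0.05, 0.50)],
]
scene_2009 = [
    [(0.05, 0.50), (0.22, 0.28), (0.20, 0.25)],
    [(0.05, 0.48), (0.06, 0.52), (0.25, 0.30)],
]

map_2008 = classify(scene_2008)
map_2009 = classify(scene_2009)
print(deforested_pixels(map_2008, map_2009))  # [(0, 1), (1, 2)]
```

Real protocols have to deal with clouds, haze, sensor differences and misaligned pixels before a comparison like this means anything, which is where most of the research effort goes.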

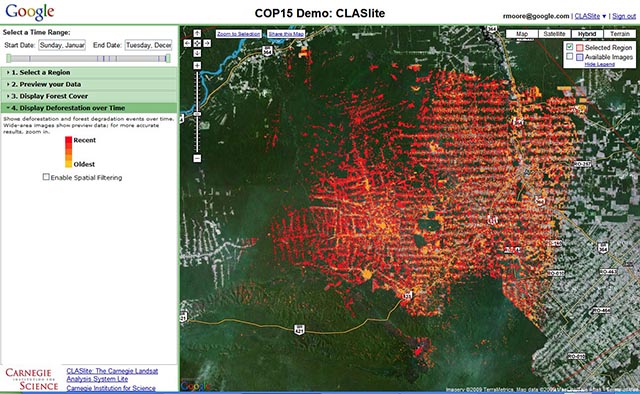

Google has now gotten itself into the environmental remote sensing game, but in a Google-esque way: massively, ubiquitously, computationally intensively, plausibly benignly, and with probable long-term financial benefits. They are now running a program to vacuum up satellite imagery and apply landcover change detection optimized for spotting deforestation, and for the time being targeted at the Amazon basin. The public doesn’t currently get access to the results, but presumably that access will be rolled out once Google et al. are confident in the system. I have to hand it to Google: they are technically careful, but politically aggressive. Amazon deforestation is (or should still be) a very political topic.

The particular landcover change algorithms they are using are apparently the direct product of Greg Asner’s group at the Carnegie Institution for Science and Carlos Souza at Imazon. To signal my belief in the importance of this project I’m not going to make a joke about Dr. Asner, as would normally be required by my background in the Ustin Mafia. (AsnerLAB!)

“We decided to find out, by working with Greg and Carlos to re-implement their software online, on top of a prototype platform we’ve built that gives them easy access to terabytes of satellite imagery and thousands of computers in our data centers.”

That’s an interesting comment in its own right. Landcover/landuse change analysis algorithms presumably require a reasonably general-purpose computing environment for implementation. The fact that they could be run “on top of a prototype platform … that gives them easy access to … computers in our data centers” suggests that Google has created some kind of more-or-less general-purpose abstraction layer that can invoke their unprecedented computing and data resources.

They back that comment up in the bullet points:

“Ease of use and lower costs: An online platform that offers easy access to data, scientific algorithms and computation horsepower from any web browser can dramatically lower the cost and complexity for tropical nations to monitor their forests.”

Is Google signaling their development of a commercial supercomputing cloud, a la Amazon S3? Based on the further marketing-speak in the bullets that follow that claim, I would say absolutely yes. This is a test project and a demo for that business. You heard it here first, folks.

Mongabay points out that it’s not just tropical forests that are quietly disappearing, and that Canada and some other developed countries don’t do any kind of good job of aggregating or publicly mapping their own enormous deforestation. I wonder: when will Google point its detection program at British Columbia’s endlessly expanding network of just-out-of-sight-of-the-highway clearcuts? And what facts and figures will become readily accessible when it does?

Mongabay also implies that LIDAR might be involved in this particular process of detecting landcover change, but that wouldn’t be the case. Light Detection and Ranging is commonly used in characterizing forest canopy, but it’s still a plane-based imaging technique, and as such not appropriate for Google’s world-scale ambitions. We still don’t have a credible hyperspectral satellite, and we’re nowhere close to having a LIDAR satellite that can shoot reflecting lasers at all places on the surface of the earth. Although if we did have a satellite that shot reflecting lasers at all places on the surface of the earth, I somehow wouldn’t be surprised if Google were responsible.

Which leads me to the point in the Google-related post where I confess my nervousness around GOOG taking on yet another service (environmental change mapping) that should probably be handled by a democratically directed, publicly accountable organization rather than a publicly traded for-profit corporation. And this is the point in the post where I admit that they are taking on that function first and/or well.